NVIDIA Sionna: An AI-Native 6G Wireless System Design Tool

Introduction

In wireless engineering, the evolution from model-based communication systems to data-driven, AI-native architectures is one of the most significant paradigm shifts. Traditional simulation frameworks, such as MATLAB-based link-level simulators, struggle to integrate gradient-based optimization and deep learning workflows.

NVIDIA addresses this gap with Sionna, an AI-native 6G wireless system design tool. It is a GPU-accelerated, differentiable, end-to-end communication system library built on modern machine learning frameworks.

Key Pointers for Sionna

- Sionna is an open-source platform, freely available for research, experimentation, and rapid prototyping.

- It is built on Python, TensorFlow, and Keras, which lets developers assemble end-to-end communication pipelines in the same way they build neural networks.

- It models the entire communication chain as a single differentiable system for joint optimization.

- Includes standardized 5G PHY components like LDPC, Polar codes, OFDM, and MIMO.

- Supports realistic wireless channels such as AWGN, Rayleigh, and 3GPP models.

- Sionna RT provides ray-tracing-based RF simulation for real-world propagation environments.

- Uses NVIDIA GPUs to significantly speed up large-scale wireless simulations.

- Its modular architecture offers reusable and customizable blocks for flexible system design.

- 6G research ready: it supports emerging use cases like RIS, NTN, and AI-driven air interfaces.

- AI-native design: it enables neural receivers and learned communication strategies using deep learning.
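To make the idea of a communication chain concrete, here is a minimal sketch of a link-level simulation: uncoded BPSK over an AWGN channel, with the estimated bit error rate compared against the closed-form value. It deliberately uses only the Python standard library and is not Sionna's API; the function names `simulate_bpsk_ber` and `theoretical_bpsk_ber` are hypothetical and chosen for illustration.

```python
import math
import random


def simulate_bpsk_ber(ebno_db, num_bits=20000, seed=0):
    """Estimate the bit error rate of uncoded BPSK over an AWGN channel."""
    rng = random.Random(seed)
    ebno = 10.0 ** (ebno_db / 10.0)
    # Unit-energy symbols: noise variance per real dimension is N0/2 = 1/(2*Eb/N0).
    sigma = math.sqrt(1.0 / (2.0 * ebno))
    errors = 0
    for _ in range(num_bits):
        bit = rng.getrandbits(1)                   # source
        symbol = 1.0 - 2.0 * bit                   # mapper: 0 -> +1, 1 -> -1
        received = symbol + rng.gauss(0.0, sigma)  # AWGN channel
        decided = 1 if received < 0.0 else 0       # hard-decision demapper
        errors += int(decided != bit)
    return errors / num_bits


def theoretical_bpsk_ber(ebno_db):
    """Closed-form BPSK bit error rate: 0.5 * erfc(sqrt(Eb/N0))."""
    ebno = 10.0 ** (ebno_db / 10.0)
    return 0.5 * math.erfc(math.sqrt(ebno))


if __name__ == "__main__":
    for ebno_db in (0.0, 2.0, 4.0, 6.0):
        print(ebno_db, simulate_bpsk_ber(ebno_db), theoretical_bpsk_ber(ebno_db))
```

A library such as Sionna expresses the same source, mapper, channel, and demapper stages as reusable blocks operating on batched tensors, which is what makes GPU execution and differentiation possible.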

Why do we need Sionna now?

A tool like NVIDIA Sionna is needed over traditional communication tools like MATLAB for the following reasons.

- Traditional communication tools like MATLAB follow a block-by-block, non-differentiable approach, whereas Sionna enables end-to-end optimization of the entire system.

- Legacy tools rely on fixed mathematical models, but Sionna integrates with TensorFlow to support AI-driven and learnable communication components.

- Simulation in legacy systems is often CPU-bound and slow, while Sionna uses GPU acceleration for massively parallel simulations.

- Traditional tools have limited support for real-time AI experimentation, whereas Sionna allows neural receivers and adaptive communication strategies.

- Channel modeling in legacy tools is mostly analytical, while Sionna extends to ray-tracing and realistic environment-based simulations.

- Overall, Sionna provides a modern, scalable, and AI-native framework, making it far more suitable for 5G/6G research than legacy tools.

The table below compares the traditional system design approach with the Sionna design approach.

| Aspect | Traditional System Approach | Sionna System Approach |
|---|---|---|
| Optimization | Block-wise | End-to-end |
| Channel | Analytical | Differentiable |
| Receiver | Rule-based | Learnable |
| Tools | MATLAB | Python + TensorFlow |

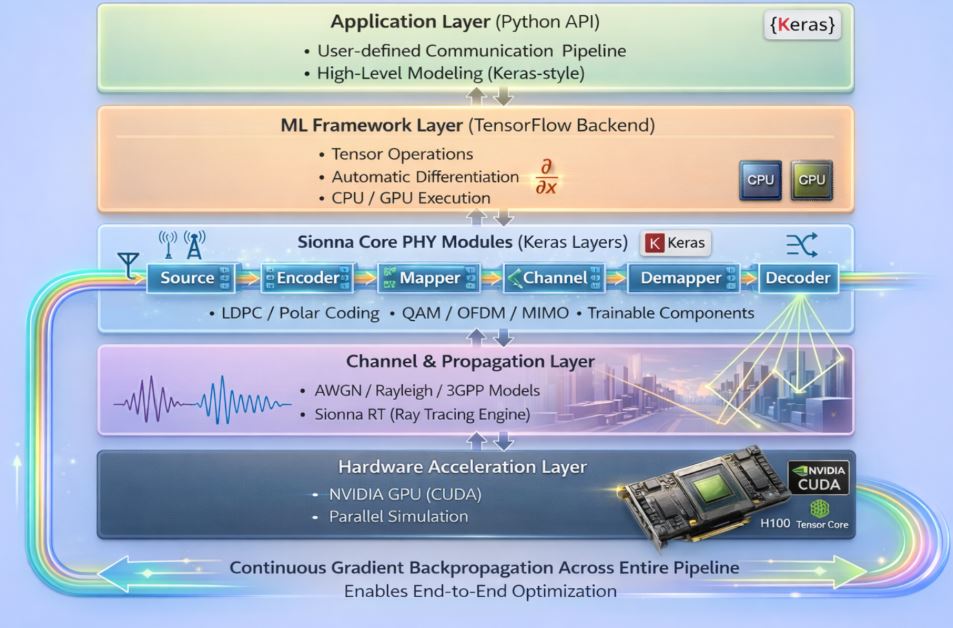

Sionna Reference Working Architecture

NVIDIA Sionna uses a layered architecture to build an end-to-end differentiable pipeline for modern wireless communication systems.

- Application Layer is the topmost layer. It provides a Python-based interface where users can define communication workflows using high-level, Keras-style modeling.

- ML Framework Layer is powered by TensorFlow, which manages tensor operations, automatic differentiation, and execution across CPU or GPU.

- Sionna 5G/6G PHY Modules form the core of the system. They are implemented as modular layers such as source, encoder, mapper, channel, demapper, and decoder, which together form the complete communication chain and support technologies like LDPC coding, OFDM, and MIMO.

- Channel & Propagation Layer sits below the PHY modules and models real-world wireless environments using AWGN, fading, 3GPP models, and advanced ray tracing via Sionna RT.

- Hardware Acceleration Layer leverages NVIDIA GPUs for high-speed parallel simulations.

A key highlight of the architecture is continuous gradient backpropagation across the entire pipeline, which enables end-to-end optimization and the integration of AI-driven techniques into communication system design.
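The following toy sketch illustrates what gradient-based training of a receiver block looks like. It trains a one-parameter soft demapper for BPSK over AWGN with plain gradient descent on a binary cross-entropy loss. The gradient is written out by hand and the code uses only the standard library, whereas a framework like TensorFlow would obtain it through automatic differentiation. The names `make_dataset` and `train_demapper` are hypothetical, and this is not how Sionna itself is implemented.

```python
import math
import random


def make_dataset(num_samples, sigma, seed=0):
    """Generate (bit, received sample) pairs for BPSK over AWGN."""
    rng = random.Random(seed)
    data = []
    for _ in range(num_samples):
        bit = rng.getrandbits(1)
        y = (1.0 - 2.0 * bit) + rng.gauss(0.0, sigma)  # mapper + channel
        data.append((bit, y))
    return data


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_demapper(data, steps=150, learning_rate=1.0):
    """Learn w so that sigmoid(w * y) approximates P(bit = 0 | y)."""
    w = 0.0
    for _ in range(steps):
        grad = 0.0
        for bit, y in data:
            p_zero = sigmoid(w * y)         # predicted probability of bit 0
            target = 1.0 - bit              # 1.0 when the transmitted bit was 0
            grad += (p_zero - target) * y   # derivative of cross-entropy w.r.t. w
        w -= learning_rate * grad / len(data)
    return w


if __name__ == "__main__":
    sigma = 1.0
    w = train_demapper(make_dataset(3000, sigma))
    # For this channel the exact log-likelihood ratio is (2 / sigma**2) * y.
    print("learned scale:", w, "analytical scale:", 2.0 / sigma ** 2)
```

The learned scale should land close to the analytical value 2/σ², which shows a learnable block recovering a known optimal receiver from data alone. In a differentiable pipeline the same gradient signal can also flow back through the channel to transmitter-side parameters.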

Frequently Asked Questions on Sionna

Below are some frequently asked questions about Sionna. This list will continue to evolve as more relevant queries arise.

Q1. What is Sionna?

Sionna is a wireless communication and physical-layer simulation library built on TensorFlow, offering modular PHY components for research, education, and AI-driven system design.

Q2. What can Sionna simulate?

Sionna supports simulation of modulation, channel coding, OFDM, MIMO systems, channel models, detection, equalization, link-level performance, and fully differentiable end-to-end communication systems.

Q3. Is Sionna Python-based?

Yes, Sionna is fully Python-based at the user level, while TensorFlow handles high-performance execution using optimized C++ and CUDA backends.

Q4. Do I need TensorFlow to use Sionna?

Yes, TensorFlow is a mandatory dependency since Sionna relies on its tensor operations, automatic differentiation, and device management capabilities.

Q5. Do I need a GPU to run Sionna?

No, Sionna can run on a CPU; however, for large-scale simulations (e.g., OFDM, MIMO, or ML training), a GPU is highly recommended for better performance.

Q6. What are the system requirements for Sionna?

Minimum (CPU-only):

- 64-bit Linux system (Ubuntu recommended)

- Python 3.8–3.12

- TensorFlow (CPU version)

- Minimum 8 GB RAM

- CPU with AVX/AVX2 support

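Important note on AVX: the prebuilt TensorFlow binaries are compiled with AVX instructions, so older CPUs or environments that do not expose AVX cannot run them, and therefore cannot run Sionna with a standard TensorFlow installation.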
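A quick way to check the AVX requirement on an x86 Linux machine is to read the CPU feature flags from `/proc/cpuinfo`. The sketch below assumes that file format; the helper names `parse_cpu_flags` and `has_avx` are hypothetical, and on other operating systems the function simply returns `None`.

```python
def parse_cpu_flags(cpuinfo_text):
    """Return the set of CPU feature flags found in /proc/cpuinfo-style text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            flags.update(value.split())
    return flags


def has_avx(cpuinfo_path="/proc/cpuinfo"):
    """Return True/False if the flags can be read, or None if they cannot."""
    try:
        with open(cpuinfo_path, encoding="utf-8") as handle:
            return "avx" in parse_cpu_flags(handle.read())
    except OSError:
        return None  # file missing or unreadable, e.g. not a Linux system


if __name__ == "__main__":
    print("AVX available:", has_avx())
```

Running this inside a virtual machine is also useful, since the hypervisor may hide AVX from the guest even when the host CPU supports it.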

Recommended (GPU-accelerated):

- NVIDIA GPU (Turing, Ampere, Ada, Hopper or newer)

- Compatible CUDA Toolkit and cuDNN

- At least 16 GB GPU memory for large workloads

- Linux or WSL2 environment

Q7. Can Sionna run inside VirtualBox?

Sionna can run in VirtualBox on the CPU, but GPU acceleration is not available because VirtualBox lacks CUDA passthrough. Performance may also suffer from virtualization overhead and limited AVX exposure.

Q8. Is Sionna similar to MATLAB 5G Toolbox?

Conceptually yes, but MATLAB 5G Toolbox is standards-focused, whereas Sionna enables differentiable and AI-native communication system design.

Q9. Does Sionna automatically use GPUs?

Yes, if TensorFlow detects a compatible CUDA-enabled GPU, Sionna automatically utilizes it without requiring code changes.
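To confirm which GPUs TensorFlow can see, you can query its device list. The sketch below uses the TensorFlow 2 call `tf.config.list_physical_devices`; the wrapper name `visible_gpus` is hypothetical, and it returns `None` when TensorFlow is not installed.

```python
def visible_gpus():
    """Return the names of GPUs TensorFlow detects, or None without TensorFlow."""
    try:
        import tensorflow as tf
    except ImportError:
        return None
    return [device.name for device in tf.config.list_physical_devices("GPU")]


if __name__ == "__main__":
    gpus = visible_gpus()
    if gpus is None:
        print("TensorFlow is not installed")
    elif gpus:
        print("GPUs visible to TensorFlow:", gpus)
    else:
        print("No GPU detected; computations will run on the CPU")
```

An empty list usually points to a missing or mismatched CUDA/cuDNN installation rather than a problem in Sionna.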

Q10. Can neural networks be integrated into Sionna?

Yes, Sionna is specifically designed for ML-based PHY development, allowing seamless integration of neural receivers, learned channel estimators, and end-to-end trained systems.

Q11. Is Sionna suitable for 6G research?

Yes, Sionna is widely used in 6G research areas such as AI-native PHY, learned waveforms, neural decoders, and advanced channel modeling.

Q12. Is Sionna open-source?

Yes, Sionna is an open-source library released by NVIDIA, making it accessible for both academic and industrial research.